Outcry against US tech, France's leaky security culture, and more...

Dependence on US tech, digital sovereignty, a failing security culture in France, and a critical watch on European tech news.

Not predictions, still less promises: six plausible scenarios for understanding where technology is slowly drifting, and what that changes for our everyday use.

How and why to migrate your Jekyll blog to Quarkus Roq

What's new in pip 26.1 - lockfiles and dependency cooldowns! Richard Si describes an excellent set of upgrades to Python's default pip tool for installing dependencies. This version drops support for Python 3.9 - fair enough, since it's been EOL since October. macOS still ships Python 3.9 as its default python3, so I tried out the new pip version against Python 3.14 like this: `uv python install 3.14`, `mkdir /tmp/experiment`, `cd /tmp/experiment`, `python3.14 -m venv venv`, `source…`

Introducing talkie: a 13B vintage language model from 1930 New project from Nick Levine, David Duvenaud, and Alec Radford (of GPT, GPT-2, Whisper fame). talkie-1930-13b-base (53.1 GB) is a "13B language model trained on 260B tokens of historical pre-1931 English text". talkie-1930-13b-it (26.6 GB) is a checkpoint "finetuned using a novel dataset of instruction-response pairs extracted from pre-1931 reference works", designed to power a chat interface. You can try that out here. Both models are…

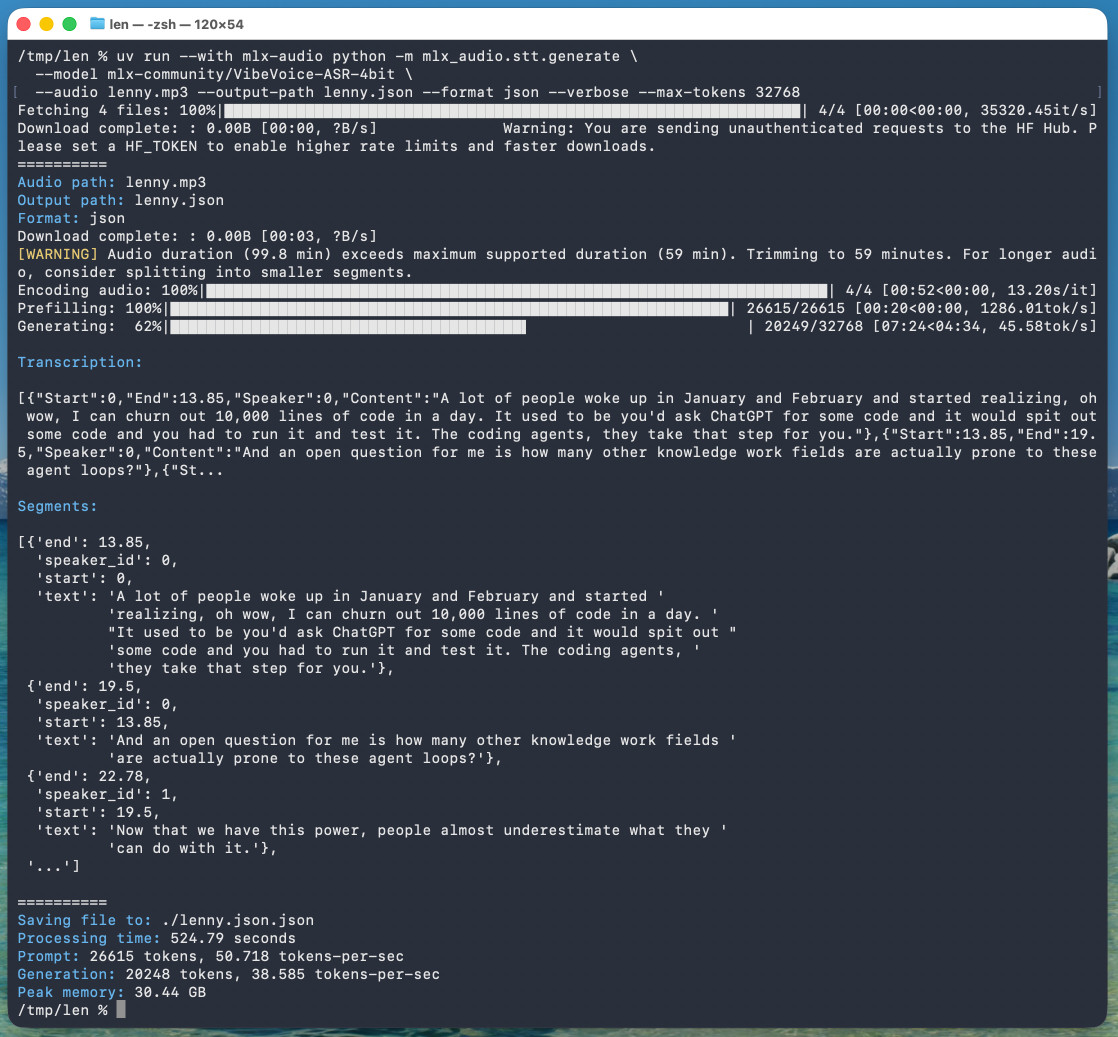

microsoft/VibeVoice VibeVoice is Microsoft's Whisper-style audio model for speech-to-text, MIT licensed and with speaker diarization built into the model. Microsoft released it on January 21st, 2026 but I hadn't tried it until today. Here's a one-liner to run it on a Mac with uv, mlx-audio (by Prince Canuma) and the 5.71GB mlx-community/VibeVoice-ASR-4bit MLX conversion of the 17.3GB VibeVoice-ASR model, in this case against a downloaded copy of my recent podcast appearance with Lenny…

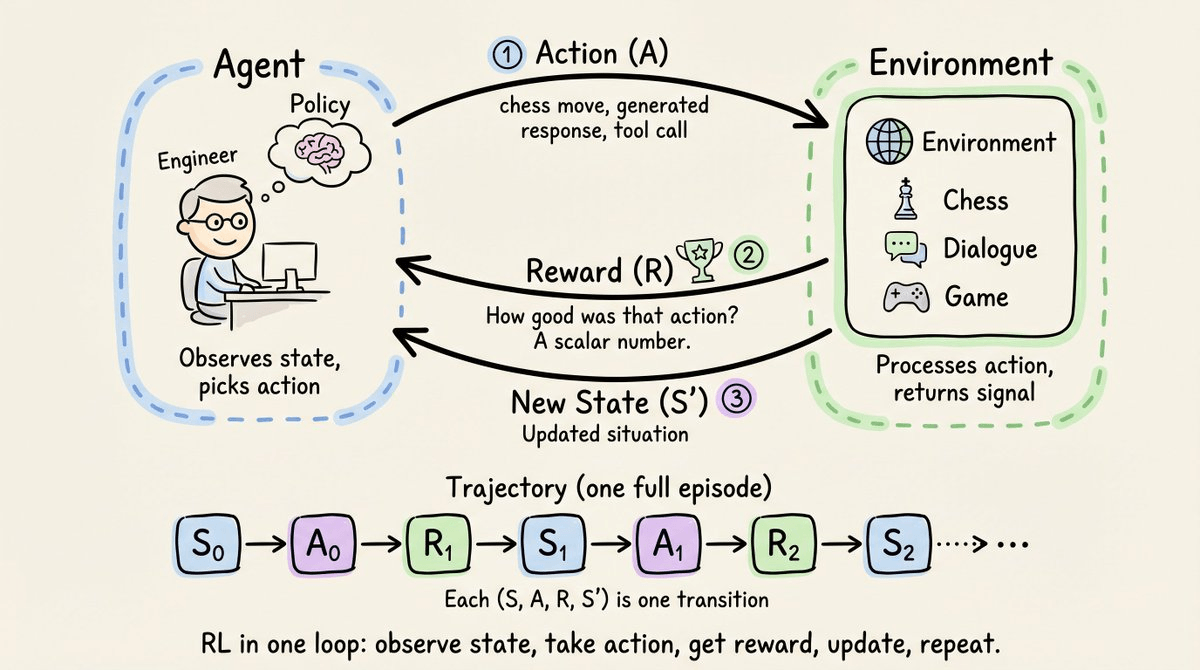

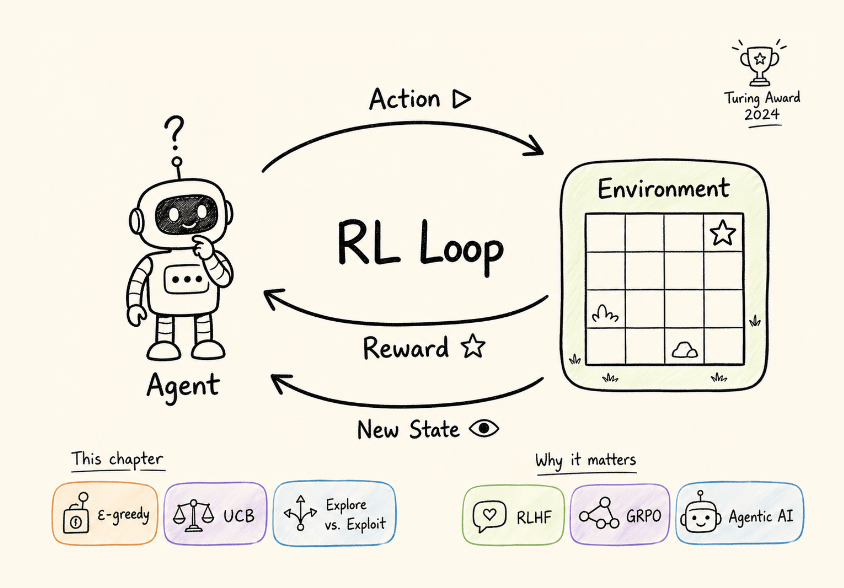

The era of not writing custom reward functions.

For many years, Microsoft and OpenAI's relationship has included a weird clause saying that, should AGI be achieved, Microsoft's commercial IP rights to OpenAI's technology would be null and void. That clause appeared to end today. I decided to try and track its expression over time on openai.com. OpenAI, July 22nd 2019 in Microsoft invests in and partners with OpenAI to support us building beneficial AGI (emphasis mine): OpenAI is producing a sequence of increasingly powerful AI technologies,…

Speech translation in Google Meet is now rolling out to mobile devices I just encountered this feature via a "try this out now" prompt in a Google Meet meeting. It kind-of worked! This is Google's implementation of the ultimate sci-fi translation app, where two people can talk to each other in two separate languages and Meet translates from one to the other and - with a short delay - repeats the text in your preferred language, with a rough imitation of the original speaker's voice. It can only…

A colleague told me something recently that I keep thinking about. She said, unprompted, that she appreciated seeing both sides of my AI conversations. Not just the output. The full thread. My prompts, the AI’s responses, the back and forth, the dead ends, the iterations. She said it made her trust me more. This piece […]

@scottjla on Twitter, in reply to my pelican riding a bicycle benchmark: "I feel like we need to stack these tests now". I checked to confirm that the model (ChatGPT Images 2.0) added the "WHY ARE YOU LIKE THIS" sign of its own accord, and it did. The prompt Scott used was: "Create an image of a horse riding an astronaut, where the astronaut is riding a pelican that is riding a bicycle. It looks very chaotic but they all just manage to balance on top of each other". Tags: text-to-image,…

Since GPT-5.4, we’ve unified Codex and the main model into a single system, so there’s no separate coding line anymore. GPT-5.5 takes this further, with strong gains in agentic coding, computer use, and any task on a computer. — Romain Huet, confirming OpenAI won't release a GPT-5.5-Codex model Tags: generative-ai, gpt, openai, ai, llms

GPT-5.5 prompting guide Now that GPT-5.5 is available in the API, OpenAI have released a wealth of useful tips on how best to prompt the new model. Here's a neat trick they recommend for applications that might spend considerable time thinking before returning a user-visible response: Before any tool calls for a multi-step task, send a short user-visible update that acknowledges the request and states the first step. Keep it to one or two sentences. I've already noticed their Codex app doing…
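As a rough illustration of how the quoted tip might be wired into an application, here is a minimal Python sketch that appends the instruction to a system prompt. The `build_messages` helper, the `system`/`user` role names and the list-of-dicts message shape are assumptions chosen for illustration, not something the guide excerpt specifies; only the instruction text itself comes from the excerpt.

```python
# Instruction text quoted from the guide excerpt above.
PREAMBLE_INSTRUCTION = (
    "Before any tool calls for a multi-step task, send a short user-visible "
    "update that acknowledges the request and states the first step. "
    "Keep it to one or two sentences."
)


def build_messages(user_request, base_instructions=""):
    """Hypothetical helper: combine existing instructions with the tip.

    The role names and message shape are assumptions; adapt them to
    whatever client library is actually in use.
    """
    parts = [p for p in (base_instructions, PREAMBLE_INSTRUCTION) if p]
    return [
        {"role": "system", "content": "\n\n".join(parts)},
        {"role": "user", "content": user_request},
    ]


if __name__ == "__main__":
    for message in build_messages(
        "Refactor the parser module", "You are a coding agent."
    ):
        print(message["role"], "->", message["content"])
```

Keeping the instruction in a single constant makes it easy to toggle or reword without touching the rest of the prompt assembly.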

Release: llm 0.31

- New GPT-5.5 OpenAI model: `llm -m gpt-5.5`. #1418
- New option to set the text verbosity level for GPT-5+ OpenAI models: `-o verbosity low`. Values are low, medium, high.
- New option for setting the image detail level used for image attachments to OpenAI models: `-o image_detail low`. Values are low, high and auto, and GPT-5.4 and 5.5 also accept original.
- Models listed in extra-openai-models.yaml are now also registered as asynchronous. #1395

Tags: gpt, openai, llm
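The options in these release notes can be combined on one command line. The following Python sketch only assembles and validates the argument list; it does not call the llm tool or any API. The `build_llm_command` helper is hypothetical, and the `gpt-5.4` model ID string is an assumption (the notes only show `gpt-5.5` as an ID). The allowed values are taken from the notes above.

```python
import shlex

# Option values as listed in the llm 0.31 release notes above.
VERBOSITY_LEVELS = {"low", "medium", "high"}
IMAGE_DETAIL_LEVELS = {"low", "high", "auto"}
# Per the notes, GPT-5.4 and 5.5 also accept "original".
# The "gpt-5.4" ID string is an assumption, not confirmed by the notes.
MODELS_ACCEPTING_ORIGINAL = {"gpt-5.4", "gpt-5.5"}


def build_llm_command(prompt, model="gpt-5.5", verbosity=None, image_detail=None):
    """Hypothetical helper: return an argv list for an llm CLI call."""
    argv = ["llm", "-m", model]
    if verbosity is not None:
        if verbosity not in VERBOSITY_LEVELS:
            raise ValueError(f"unsupported verbosity: {verbosity!r}")
        argv += ["-o", "verbosity", verbosity]
    if image_detail is not None:
        allowed = set(IMAGE_DETAIL_LEVELS)
        if model in MODELS_ACCEPTING_ORIGINAL:
            allowed.add("original")
        if image_detail not in allowed:
            raise ValueError(f"unsupported image_detail: {image_detail!r}")
        argv += ["-o", "image_detail", image_detail]
    argv.append(prompt)
    return argv


if __name__ == "__main__":
    # Print a shell-quoted command line for inspection.
    print(shlex.join(build_llm_command("Summarize this release", verbosity="low")))
```

Validating values before building the command means a typo such as `-o verbosity lo` is caught locally rather than after a round trip to the API.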